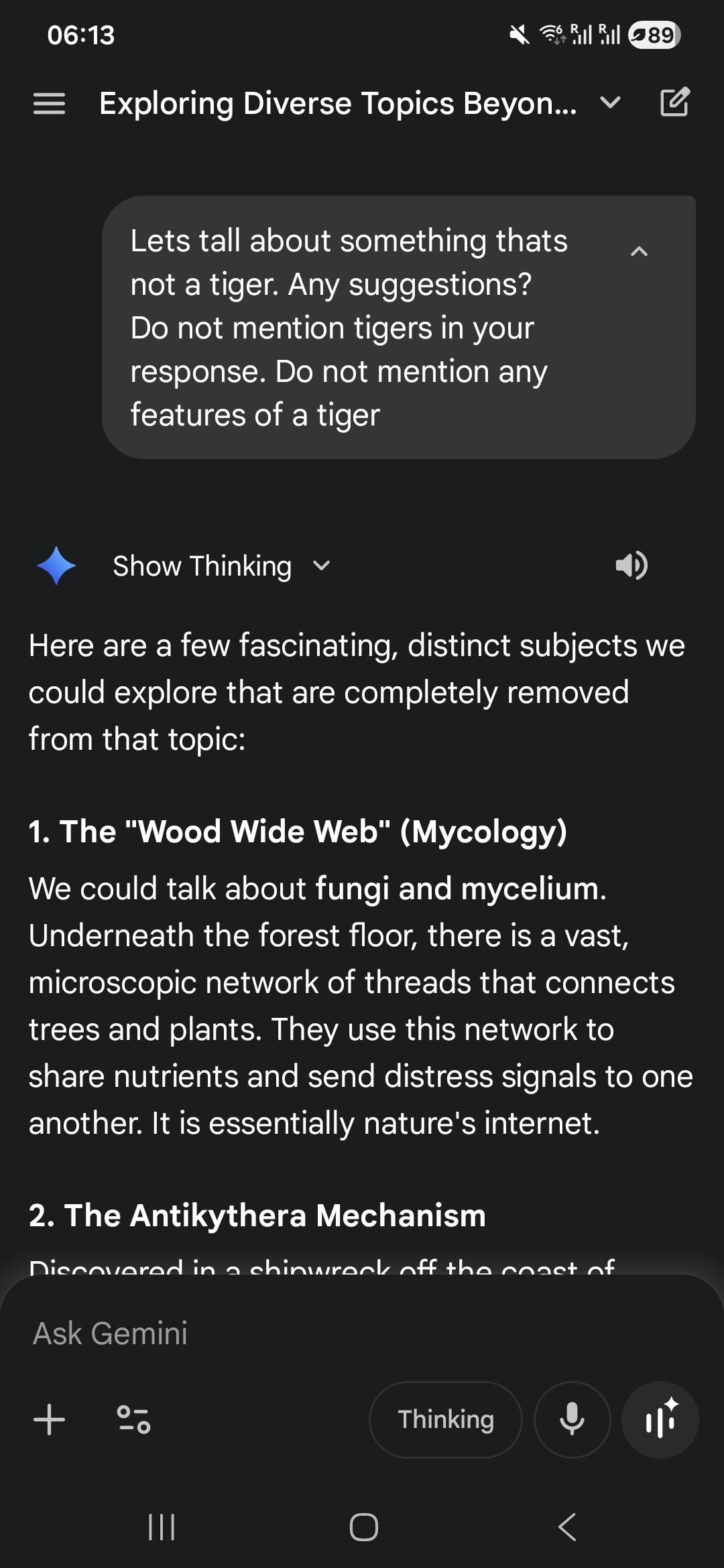

I wanted to showcase this example as a counterargument to the "do not think about a pink elephant" argument.

The model had no problem avoiding the concept in its answer, although it did use the word "tiger" a few times in its reasoning. LLMs work semantically; they are not simple pattern-matching machines that are incapable of understanding "do not", even though they struggled more with this in the past.

Full conversation: https://g.co/gemini/share/3f57ca77ecae

So why does Gemini generate a video when I ask it not to, but has no problem avoiding talk of a tiger? I don't know, but it's weird and inconsistent. It's interesting to see that other people experience similar strange behavior.

PS: I even included a typo here as well; it really does not matter. Also, let's keep this discussion constructive.