The goal was just to see which model performs like a real dev.

Here's my takeaway:

Opus 4.5 handled real repo issues the best. It fixed things without breaking unrelated parts and didn't hallucinate new abstractions. It felt the most "engineering-minded."

GPT-5.1 was close behind. It explained its reasoning step by step and sometimes added improvements I never asked for. That's helpful when you want safety, annoying when you want precision.

Gemini solved most tasks but tended to optimize or simplify things I had explicitly constrained. Good output, but sometimes too "creative."

On refactoring and architecture-level tasks:

Opus delivered the most complete refactor with consistent naming, updated dependencies, and documentation.

GPT-5.1 took longer because it analyzed first, but the output was maintainable and defensive.

Gemini produced clean code but missed deeper security concerns and design patterns.

Context windows (because it matters at repo scale):

- Opus 4.5: ~200K tokens usable; handles large repos well without losing track

- GPT-5.1: ~128K tokens, but strong long-context reasoning even near the limit

- Gemini 3 Pro: ~1M tokens, which is huge, but performance becomes inconsistent as the input gets massive

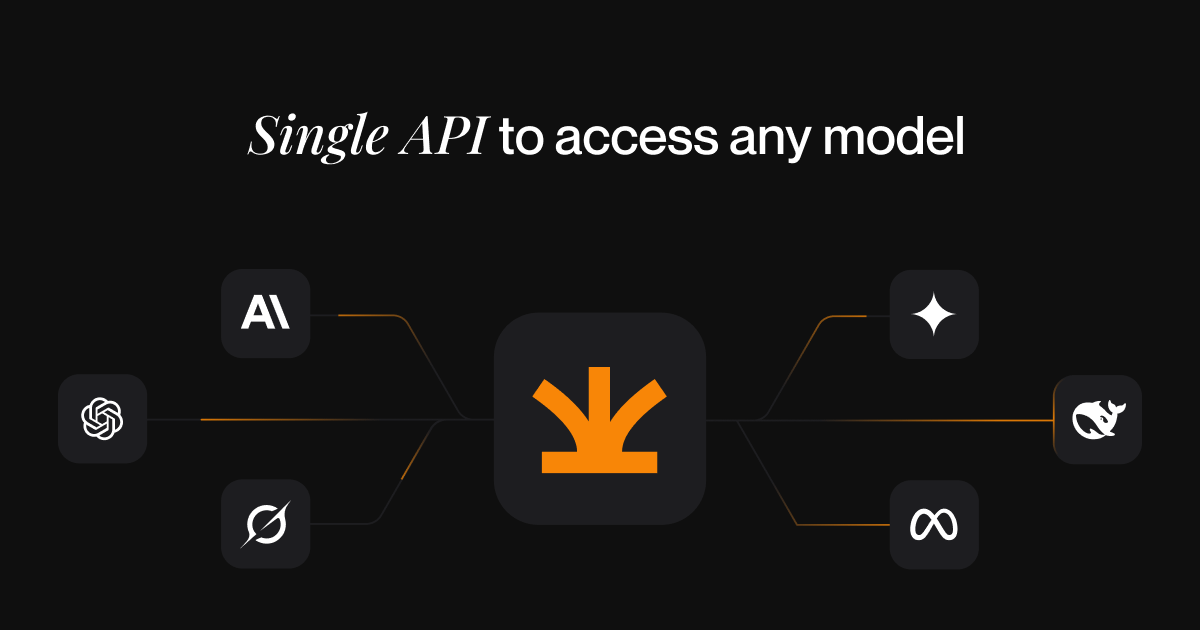

I used these frontier models side by side in my multi-agent AI setup with the Anannas LLM provider, and the results were interesting. What's your experience been with these three?

Have you run your own comparisons, and if so, what setup are you using?